Written by:

Afrah Fazlulhaq

Reviewed By:

Joy Jathinson

TL;DR

- AI systems rely on simplified retrieval, meaning they often see only fragments of your site, not the full experience.

- Headers like Accept-Language are often missing or generic, so websites end up serving the wrong content or limiting access.

- If your content isn’t directly accessible, clearly structured, and available without relying on headers, redirects, or dynamic layers, it often doesn’t get seen.

- Even top-ranking pages can be missing from AI answers if their content isn’t easily retrievable and structured at the passage level.

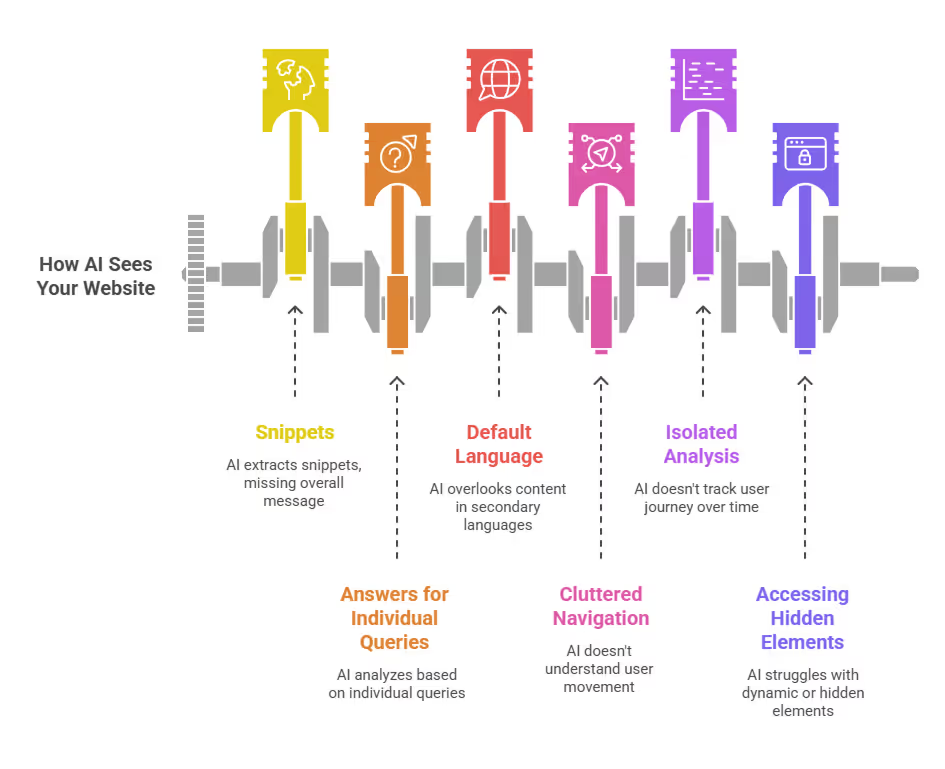

By now, AI agents are already changing how people find and evaluate websites, and with that, the idea of crawlability has quietly shifted too. Most of us still think in terms of Google: if a page is indexed, ranking, and technically sound, it should be discoverable. But AI systems don’t always move through the web the same way. They don’t always follow the same signals, they don’t interpret structure the same way, and in many cases, they’re working with a much thinner, simplified version of your site than you’d expect.

In banking, this might mean a customer asking an AI assistant for “best savings accounts in Australia” never sees your product page, even if it ranks on Google, simply because the AI couldn’t access the right version of it.

In hospitality, it shows up when a luxury villa ranks well for “private villas in Galle”, but AI assistants consistently cite aggregators instead because the villa’s actual site content isn’t easily retrievable. We analyzed 200 long-tail hospitality prompts from 4 markets and found that Sri Lanka, despite being a favoured tourist destination, had OTAs dominating answers due to unstructured websites.

How AI Crawlability Differs from Traditional SEO

For years, technical SEO has been built around a fairly stable model: browsers request pages, Googlebot crawls them, and rankings follow from how well your site is structured, linked, and optimized.

AI systems don’t follow that model. They don’t consistently navigate from page to page, interpret UI elements, or behave like a user session. Instead, they rely on retrieval systems that extract content in fragments, often through simplified HTTP requests, cached indexes, or third-party sources. Traditional crawling was about coverage: how much of your site gets indexed. AI retrieval is about access: which fragments of a page can actually be extracted.

For example, a bank’s loan eligibility page might be fully optimised for SEO, but if key details are hidden behind tabs or dynamically loaded sections, an AI system may only extract the introduction, missing the actual eligibility criteria.

In contrast, a simpler third-party comparison site with static, clearly structured content becomes easier for AI to retrieve and cite, even if it’s less authoritative.

This is why many SEO assumptions start to break in AI environments, where GEO and SEO start to diverge in a very real way. A page can rank well, drive traffic, and still be invisible in AI-generated answers, because the system retrieving information never accessed it properly in the first place.

What Happens When AI Crawlers Don’t Send Signals

One of the biggest misconceptions in modern web architecture is that crawlers behave like users or browsers. In reality, most AI and search crawlers operate with minimal context.

Across multiple studies and crawler analyses, a few consistent patterns show up:

- Many crawlers don’t send Accept-Language headers at all

- When they do, it’s often a default like en-US,en;q=0.9

- Headers rarely reflect user intent, query context, or geography

- Behaviour varies widely between bots, and there is no standard

Google itself has confirmed that Googlebot does not use Accept-Language for crawling decisions, and instead relies on URL structure and hreflang signals. Similarly, AI crawlers like OpenAI’s GPTBot or others often send minimal or no localization signals at all.

What this means in practice is simple:

Your website is making decisions for AI based on signals AI never meant to send.
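One way to check this against your own server logs is to sort each request's Accept-Language value into missing, generic, or specific. Below is a minimal Python sketch. The function name and the set of values treated as "generic" are assumptions for illustration (only `en-US,en;q=0.9` comes from the pattern above), not a standard.

```python
from typing import Optional

# Assumption: values treated as "generic" defaults. Only the first one is taken
# from the pattern described above; the rest are hypothetical additions.
GENERIC_VALUES = {"en-us,en;q=0.9", "en-us", "en", "*"}


def classify_accept_language(header: Optional[str]) -> str:
    """Return 'missing', 'generic', or 'specific' for an Accept-Language value."""
    if header is None or not header.strip():
        return "missing"
    # Normalise spacing and case so "en-US, en;q=0.9" matches "en-US,en;q=0.9".
    normalized = header.replace(" ", "").lower()
    if normalized in GENERIC_VALUES:
        return "generic"
    return "specific"


if __name__ == "__main__":
    for value in (None, "en-US,en;q=0.9", "en-AU"):
        print(repr(value), "->", classify_accept_language(value))
```

If most bot traffic lands in the first two buckets, any logic that branches on this header is effectively branching on noise for those requests.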

How Do These Signals Affect Brands

# What your server expects (ideal scenario)

Request:

GET /health-insurance

Accept-Language: en-AU

IP: Australia

→ Response:

Serve Australia-specific health insurance plans

- AUD Pricing

- Medicare-aligned Coverage

- Local Hospital Network

# What AI crawler actually sends

Request:

GET /health-insurance

Accept-Language: en-US,en;q=0.9

IP: Unknown / Data center

→ Response:

Serve default/global page (no AUD pricing, generic benefits)

What this means:

AI often fails to see your AU-specific plans, local pricing or key differentiators. So it can't mention them in answers.

In industries like health insurance, where products, pricing, and coverage vary significantly by region, this gap becomes critical. AI systems aren’t choosing to ignore your local offerings. They’re simply never seeing them.

Across the prompts and platforms we track, we consistently see AI systems defaulting to simplified retrieval signals rather than context-aware requests. In many cases, the request itself carries almost no meaningful information about the user or the query context.

As our GEO specialist, Joy, puts it:

“Most AI crawlers aren’t trying to understand your audience, they’re just trying to extract something usable. If your site relies on context signals like language or location, there’s a high chance AI is missing the point entirely.”

This becomes critical in industries like finance, where content varies by market. A bank operating across the UAE and Singapore may rely on language or region detection to serve the right product details.

But if an AI crawler sends no clear location or language signal, it may only access one default version, meaning entire regional offerings never enter AI-generated answers.
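One way to reduce this dependency is to let the URL decide which market a page belongs to, and treat headers only as a fallback hint. The Python sketch below illustrates the idea. The market codes, the `/ae/` and `/sg/` path prefixes, and the default market are all hypothetical, and this is not a description of any particular bank's setup.

```python
from typing import Optional

# Hypothetical market codes, URL prefixes, and default, for illustration only.
SUPPORTED_MARKETS = ("ae", "sg")
DEFAULT_MARKET = "ae"


def market_from_path(path: str) -> Optional[str]:
    """Return the market encoded in the first path segment, if any."""
    segments = [s for s in path.split("/") if s]
    if segments and segments[0].lower() in SUPPORTED_MARKETS:
        return segments[0].lower()
    return None


def resolve_market(path: str, accept_language: Optional[str] = None) -> str:
    """Pick the market from the URL first; use the header only as a fallback."""
    from_path = market_from_path(path)
    if from_path is not None:
        return from_path
    if accept_language:
        # Region subtag of the first listed language, e.g. "en-SG" -> "sg".
        first = accept_language.split(",")[0].split(";")[0].strip().lower()
        if "-" in first:
            region = first.split("-")[-1]
            if region in SUPPORTED_MARKETS:
                return region
    return DEFAULT_MARKET


if __name__ == "__main__":
    print(resolve_market("/sg/loans", "en-US,en;q=0.9"))  # URL wins over the header
    print(resolve_market("/loans", None))                 # falls back to the default
```

With this ordering, a crawler that sends a generic header or none at all still reaches the Singapore page, as long as it requests the Singapore URL.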

Is Your Website Responding to the Wrong Signals?

Modern websites are built to respond dynamically. Depending on who visits, where they’re from, and what headers they send, the server might:

- Redirect to a different language version

- Serve region-specific content

- Trigger personalization layers

- Modify page structure or content

All of this makes sense for real users. But for AI crawlers, it creates friction. If a crawler sends a generic or empty header, your site may redirect it incorrectly, serve the wrong version of content or block access to certain variations entirely.

This is especially common with:

- Accept-Language based redirects

- Geo-IP routing

- Client-side personalization

What happens then is that your website is behaving correctly for users, but incorrectly for AI. In hospitality, this often appears in multilingual or geo-routed villa websites. A user from Europe might see a beautifully localised version of the site, but an AI crawler hitting the same URL may be redirected differently or served a generic version.

As a result, the content that actually converts guests never becomes part of AI retrieval, while global platforms with static, standardised pages dominate visibility.
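A quick way to test whether a page behaves this way is to request the same URL under a few header profiles and compare what comes back. The Python sketch below does that with a pluggable fetch function. The profile names, header values, and function names are assumptions for illustration, and `urllib_fetch` is just one possible fetcher built on the standard library.

```python
import hashlib
import urllib.request
from typing import Callable, Dict, Mapping

# A fetcher takes (url, headers) and returns the response body as text.
Fetcher = Callable[[str, Mapping[str, str]], str]

# Hypothetical header profiles: one browser-like user and two crawler-like requests.
PROFILES: Dict[str, Dict[str, str]] = {
    "browser_au": {"Accept-Language": "en-AU"},
    "generic_bot": {"Accept-Language": "en-US,en;q=0.9"},
    "no_headers": {},
}


def urllib_fetch(url: str, headers: Mapping[str, str]) -> str:
    """Fetch a URL with the given headers (urllib follows redirects by default)."""
    request = urllib.request.Request(url, headers=dict(headers))
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


def fingerprint(body: str) -> str:
    """Short, stable hash of a response body."""
    return hashlib.sha256(body.encode("utf-8")).hexdigest()[:12]


def audit_variants(fetch: Fetcher, url: str,
                   profiles: Mapping[str, Mapping[str, str]] = PROFILES) -> Dict[str, str]:
    """Fetch one URL under each header profile and return a fingerprint per profile."""
    return {name: fingerprint(fetch(url, headers)) for name, headers in profiles.items()}


def header_dependent(fingerprints: Mapping[str, str]) -> bool:
    """True if the same URL produced different bodies for different profiles."""
    return len(set(fingerprints.values())) > 1
```

Calling `audit_variants(urllib_fetch, "https://example.com/")` would run the check against a live page. Differing fingerprints are a prompt to look closer rather than proof of a problem, since timestamps or session tokens in the HTML can also change the hash.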

Is AI Seeing Your Full Website?

When we talk about crawlability today, we often assume completeness: that bots eventually see everything. AI systems, by contrast, typically operate with shallow retrieval passes, limited crawl depth, and pre-indexed or sampled data.

Think of it like this: A hotel website might include room details, experiences, and pricing across multiple pages and interactions. But to an AI system, it may only appear as a single paragraph pulled from one page, without pricing context, availability, or unique selling points.

AI works on what it can access in one pass. This creates what we can call a “partial website” problem, where the version of your site that exists for AI is incomplete, fragmented, and sometimes misleading.

Where Your Content Disappears

Once you understand this, it becomes easier to see where content gets lost.

1. Header-dependent content

If your content delivery relies on headers like Accept-Language or Accept, you’re assuming the crawler is sending meaningful signals. In reality, most AI crawlers either omit these headers or default to generic values like en-US, which don’t reflect user intent. As a result, the server may serve the wrong version of a page, or never expose the intended one at all, leaving entire variations of your content unseen.

For example, a multilingual banking site may serve Sinhala or Tamil versions based on headers, but if AI defaults to English, those versions may never be accessed.

2. Redirect-dependent content

When content is heavily dependent on 301/302 redirects or geo-based routing, crawlers often don’t experience the same journey a user would. Multiple redirect layers can cause bots to drop off early, follow unintended paths, or only access a single version of a page. Over time, this can lead to sections of your site being inconsistently crawled, misrepresented, or completely missed.

A hospitality site redirecting users from / to /en/ or /fr/ may unintentionally create dead ends for AI crawlers that don’t follow the full redirect chain.
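To see how long a redirect journey really is, it helps to trace it hop by hop. The Python sketch below follows a chain up to a hop limit and reports whether it ended on a real page. The `resolve` callback, the hop limit of 5, and the assumption that `Location` values are already absolute are all illustrative choices, not how any specific crawler behaves.

```python
from typing import Callable, List, Optional, Tuple

# A resolver takes a URL and returns (status_code, location_or_None).
# Assumption: the location, when present, is already an absolute URL or path.
Resolver = Callable[[str], Tuple[int, Optional[str]]]

REDIRECT_CODES = {301, 302, 303, 307, 308}


def trace_redirects(resolve: Resolver, url: str,
                    max_hops: int = 5) -> Tuple[List[str], bool]:
    """Follow redirects from url; return (URLs visited, reached_a_200_page)."""
    chain = [url]
    while True:
        status, location = resolve(chain[-1])
        if status not in REDIRECT_CODES or not location:
            # Not a redirect: the chain ends here, successfully only on 200.
            return chain, status == 200
        if location in chain or len(chain) > max_hops:
            # Redirect loop, or more hops than this client is willing to follow.
            return chain, False
        chain.append(location)


if __name__ == "__main__":
    routes = {"/": (302, "/en/"), "/en/": (200, None)}
    print(trace_redirects(routes.__getitem__, "/"))
    print(trace_redirects(routes.__getitem__, "/", max_hops=0))
```

Running the same trace with a low `max_hops` approximates a client that gives up early, which is the dead-end case described above.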

3. JavaScript-rendered content

Google has invested heavily in rendering JavaScript, but many AI systems have not.

Studies by platforms like Ahrefs show that client-side rendering still introduces delays in indexing, and not all bots execute JS reliably. If key content loads after initial HTML, it may be missed by crawlers.

Many modern fintech platforms load rates, calculators, or offers dynamically, meaning AI may never see the actual numbers users care about.
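A simple check for this is to look at the raw HTML, before any JavaScript runs, and confirm the facts that matter are already there. The Python sketch below extracts visible text from un-rendered HTML and reports which key phrases are absent. The class and function names and the sample markup are hypothetical.

```python
from html.parser import HTMLParser
from typing import Iterable, List


class VisibleText(HTMLParser):
    """Collect text nodes, skipping the contents of <script> and <style>."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_from_static_html(html: str, phrases: Iterable[str]) -> List[str]:
    """Return the phrases that do not appear in the un-rendered HTML text."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [p for p in phrases if p.lower() not in text]


if __name__ == "__main__":
    # Hypothetical page: the rate only exists inside a script, not in the markup.
    sample = ('<html><body><h1>Home Loans</h1><div id="rates"></div>'
              '<script>render("5.4% p.a.")</script></body></html>')
    print(missing_from_static_html(sample, ["Home Loans", "5.4% p.a."]))
```

Any phrase this returns is one that a crawler without JavaScript execution is unlikely to see on that page.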

4. Gated or restricted content

Any content that sits behind forms, authentication layers, or region-based access controls is effectively out of reach for AI systems. Unlike users, crawlers don’t log in, submit forms, or navigate restrictions, which means valuable content in these areas is often completely excluded from AI retrieval and, by extension, from AI-generated answers.

Banks often place detailed product breakdowns behind forms or login areas, making that information completely invisible to AI systems.

The Visibility Gap

It’s tempting to treat this as a technical crawl problem, but it is a discovery problem. AI-generated answers are built through:

- Retrieval

- Selection

- Synthesis

If your content doesn’t make it through step one, retrieval, it never enters the system.

And if it never enters the system, it doesn’t exist in AI answers.

In our recent work across five hospitality clients in APAC, we found that even when brands ranked on page one for high-intent queries, they appeared in only 30–40% of relevant AI prompts.

The gap was clearly accessibility. Content existed, but parts of it weren’t being retrieved or surfaced consistently by AI systems, limiting how often those brands showed up in AI-generated answers.
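For teams that want to track a similar figure, an appearance rate can be approximated as the share of collected AI answers that mention the brand. The Python sketch below shows one simple way to compute it. The substring-matching rule, the function name, and the sample brand are assumptions, and this is not necessarily how the figures above were measured.

```python
from typing import Iterable


def appearance_rate(answers: Iterable[str], brand: str) -> float:
    """Fraction of answers that mention the brand (case-insensitive substring match)."""
    collected = list(answers)
    if not collected:
        return 0.0
    hits = sum(1 for answer in collected if brand.lower() in answer.lower())
    return hits / len(collected)


if __name__ == "__main__":
    # Hypothetical answers and brand name, for illustration only.
    sample_answers = [
        "Try Villa Example or a large booking platform.",
        "Most travellers book through an aggregator.",
        "villa example has a private pool.",
        "See the top OTA listings.",
        "Compare prices on a booking site.",
    ]
    print(f"{appearance_rate(sample_answers, 'Villa Example'):.0%}")
```

Substring matching is crude, so brand variants and misspellings would need their own handling before the number is trusted.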

How AI Extracts Passages

Another major shift is how content is processed. Search engines traditionally index pages, but AI systems extract passages. This changes how content needs to be structured.

As we’ve analyzed before, AI prefers clear, self-contained explanations, structured headings, direct answers, and logically segmented content.

Because retrieval happens at the passage level, not the page level, even a well-ranking page can fail if the relevant section isn’t clearly defined, the content is buried in dense paragraphs, or the structure is unclear.

This is why content clarity and accessibility matter more than ever.
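To get a feel for how a page looks at the passage level, you can split its HTML into heading-led chunks and flag the ones that run long. The Python sketch below does this. The class and function names and the 120-word threshold are assumptions, and real AI systems may chunk content quite differently.

```python
from html.parser import HTMLParser
from typing import List, Tuple

HEADING_TAGS = {"h1", "h2", "h3", "h4"}


class PassageSplitter(HTMLParser):
    """Group text under the most recent heading into (heading, body) passages.

    Simplification: script and style contents are not filtered out.
    """

    def __init__(self) -> None:
        super().__init__()
        self._in_heading = False
        self._heading_parts: List[str] = []
        self._body_parts: List[str] = []
        self._current_heading = ""
        self.passages: List[Tuple[str, str]] = []

    def _flush(self) -> None:
        body = " ".join(self._body_parts).strip()
        if self._current_heading or body:
            self.passages.append((self._current_heading, body))
        self._current_heading = ""
        self._body_parts = []

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:
            self._flush()  # a new heading closes the previous passage
            self._in_heading = True
            self._heading_parts = []

    def handle_endtag(self, tag):
        if tag in HEADING_TAGS and self._in_heading:
            self._in_heading = False
            self._current_heading = " ".join(self._heading_parts).strip()

    def handle_data(self, data):
        text = data.strip()
        if text:
            (self._heading_parts if self._in_heading else self._body_parts).append(text)

    def close(self):
        super().close()
        self._flush()  # emit the final passage


def split_passages(html: str) -> List[Tuple[str, str]]:
    parser = PassageSplitter()
    parser.feed(html)
    parser.close()
    return parser.passages


def long_passages(passages: List[Tuple[str, str]], max_words: int = 120) -> List[str]:
    """Headings whose body exceeds max_words (a hypothetical threshold)."""
    return [heading for heading, body in passages if len(body.split()) > max_words]
```

Passages with an empty heading, or headings returned by `long_passages`, are candidates for splitting into shorter, self-contained sections.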

This Problem Gets Worse in Multilingual Websites

Everything we’ve discussed so far becomes even more complex when multilingual setups are involved. Now you’re not just dealing with crawlability, structure, and rendering. You’re also dealing with language routing, header dependencies, canonical conflicts, and hreflang interpretation.

And because AI crawlers don’t reliably interpret these signals, entire language versions of your site can become invisible. What was meant to expand your reach can end up limiting your visibility.

In Part 2, we’ll break this down further, and show how multilingual websites, despite being one of the strongest advantages in global SEO, are often the most affected when it comes to AI discovery.